Elicit: The AI Research Assistant

Elicit is a literature assistant, primarily focused on “systematic reviews”.

Using embedding search, it retrieves existing papers, which avoids the problem of made-up references.
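To make the idea concrete, here is a minimal sketch of embedding-based retrieval. It is not Elicit's actual code: the paper titles, the three-dimensional vectors, and the `search` function are all made up for illustration, and a real system would obtain its vectors from an embedding model. The point is that results can only come from the index, so a returned reference always corresponds to an indexed paper.

```python
import math

# Hypothetical index: title -> toy embedding (assumed values, not real data).
INDEX = {
    "Paper A: Factual accuracy of LLM summaries": [0.9, 0.1, 0.0],
    "Paper B: Protein folding benchmarks": [0.1, 0.9, 0.2],
    "Paper C: Human evaluation of generated abstracts": [0.8, 0.2, 0.1],
}


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def search(query_vector, index, top_k=2):
    """Return the top_k titles ranked by similarity to the query vector."""
    ranked = sorted(
        index,
        key=lambda title: cosine(query_vector, index[title]),
        reverse=True,
    )
    return ranked[:top_k]


# The query vector stands in for the embedded user prompt.
results = search([1.0, 0.0, 0.0], INDEX)
print(results)
```

Every title in `results` is a key of `INDEX`, which is why this kind of search does not invent references; whether the retrieved papers are relevant is a separate question.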

However, at its core there is an LLM, so its ability to understand text rests entirely on the transformer's capacity to absorb context, and the results of Elicit must be evaluated carefully. In my experience (bioinformatics) this is particularly true, probably because my field has poorly written papers and ambiguous use of language (it is multidisciplinary, and biologists and computer scientists use different terms for similar concepts).

How it works

Example prompt:

I’m interested in the use of large language models (e.g., GPT-4, Claude) for summarizing scientific research, especially in the biomedical field. Please find peer-reviewed studies that evaluate how factually accurate these models are when generating abstracts or summaries of full-text articles. Focus on papers that include benchmarking, error analysis, or human expert evaluation

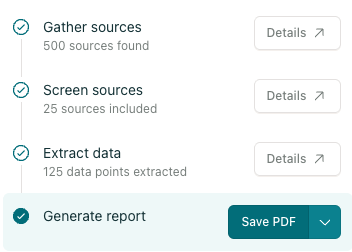

Elicit takes a multi-step approach:

- Find sources: this is done with a “vector search”. The good thing is that it might retrieve papers you would miss with a keyword search. The papers retrieved, though, might not be 100% relevant, and some obvious sources might be missed.

- Screen papers: each paper is then screened. This is done with an LLM, and Elicit tries to extract the information you care about. In my case, the presence of a benchmark or human evaluation.

- Extract data: answer the overall query by summarising the text of the papers.

- Write a report: this is the worst part. LLMs are very good at generating text, but you should write this yourself: unfortunately, LLMs are great writers, but not factually accurate or smart writers.
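The screening and extraction steps can be sketched as a small pipeline. This is only an illustration under stated assumptions, not how Elicit is implemented: `Paper`, `screen`, `keyword_judge`, and `extraction_table` are hypothetical names, the example papers are invented, and the "judge" is a plain substring check standing in for the LLM call.

```python
from dataclasses import dataclass, field


@dataclass
class Paper:
    title: str
    text: str
    extracted: dict = field(default_factory=dict)


def keyword_judge(text, criterion):
    """Stand-in for an LLM call (assumption): a case-insensitive substring check."""
    return criterion.lower() in text.lower()


def screen(papers, criteria, judge):
    """Record the judge's answer per criterion; keep papers meeting at least one."""
    kept = []
    for paper in papers:
        paper.extracted = {c: judge(paper.text, c) for c in criteria}
        if any(paper.extracted.values()):
            kept.append(paper)
    return kept


def extraction_table(papers, criteria):
    """One row per paper: the title followed by one value per criterion."""
    return [[p.title] + [p.extracted[c] for c in criteria] for p in papers]


# Invented candidates, as if returned by the "find sources" step.
candidates = [
    Paper("Study 1", "We run a benchmark and a human evaluation of summaries."),
    Paper("Study 2", "An opinion piece on language models."),
    Paper("Study 3", "Error analysis with human evaluation by clinicians."),
]
criteria = ["benchmark", "human evaluation"]

rows = extraction_table(screen(candidates, criteria, keyword_judge), criteria)
for row in rows:
    print(row)
```

Passing the judge in as a function keeps the check replaceable, which also makes it easy to compare its answers against your own reading of a few papers before trusting the table.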

Elicit output for “Evaluating LLMs in Biomedical Summarization”