Ollama & Python

Ollama is a tool for running large language models locally. Combined with Python, it lets you call those models from your own scripts and build AI applications and automation around them.

What is Ollama?

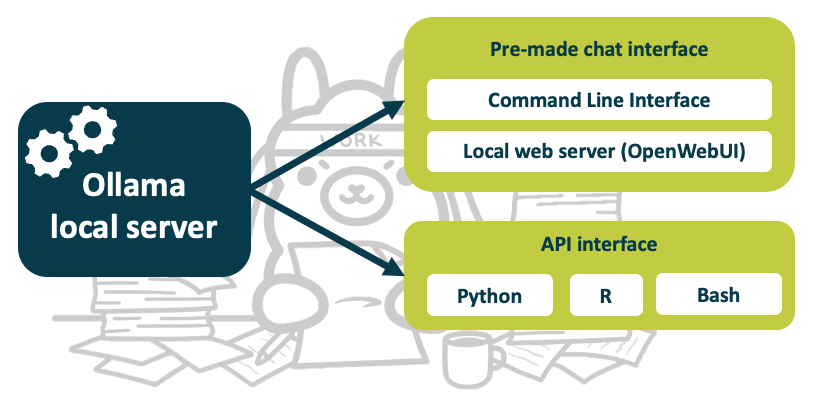

Ollama is a command-line tool and API server that:

- Simplifies Model Management: Easy installation and switching between models

- Provides API Access: RESTful API for programmatic integration (e.g. calling an LLM from your Python programs)

- Supports Multiple Models: Wide variety of open-source models (listed in the Ollama model library)
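Because the server speaks plain HTTP, the API can be reached even without a client library. The sketch below uses only the Python standard library and assumes Ollama's default local address (`http://localhost:11434`) and its `/api/chat` endpoint. The names `build_chat_request` and `ask` are illustrative helpers, not part of Ollama.

```python
import json
import urllib.request

# Assumption: Ollama's default local address; adjust if your server listens elsewhere.
OLLAMA_URL = "http://localhost:11434"


def build_chat_request(model, prompt, base_url=OLLAMA_URL):
    """Build (but do not send) a POST request for the /api/chat endpoint."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one JSON object instead of a stream of chunks
    }
    return urllib.request.Request(
        f"{base_url}/api/chat",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(model, prompt):
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(model, prompt)) as resp:
        body = json.load(resp)
    return body["message"]["content"]


# Example (requires a running Ollama server and an already pulled model):
# print(ask("qwen3:8b", "Why is the sky blue?"))
```

Keeping request construction separate from sending makes the payload easy to inspect before anything touches the network.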

Installation

Install Ollama

Follow the official instructions to install Ollama. Some experience with the command line is recommended.

If you plan to use Ollama from Python, install its Python module too:

pip install ollama

Basic Ollama Usage

- Download a model

ollama pull qwen3:8b

- To run a model (chatbot-style, but from the terminal):

ollama run qwen3:8b

- What models do you have available?

ollama list
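The same information is available from a program. A minimal sketch, assuming a server on the default address and the `/api/tags` endpoint, which returns the installed models as JSON. `model_names` and `installed_models` are hypothetical helper names.

```python
import json
import urllib.request


def model_names(tags_payload):
    """Extract model names from a decoded /api/tags response."""
    return [entry["name"] for entry in tags_payload.get("models", [])]


def installed_models(base_url="http://localhost:11434"):
    """Ask the local Ollama server which models are installed."""
    with urllib.request.urlopen(f"{base_url}/api/tags") as resp:
        return model_names(json.load(resp))


# Example (requires a running Ollama server):
# if "qwen3:8b" not in installed_models():
#     print("Run `ollama pull qwen3:8b` first")
```

A check like this can run before the first request, so a missing model produces a clear message rather than a failed call.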

Python example

    import ollama

    # Simple chat (ensure the model exists!)
    response = ollama.chat(model='qwen3:8b', messages=[
        {
            'role': 'user',
            'content': 'Why is the sky blue?',
        },
    ])
    print(response['message']['content'])
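Each chat call sees only the messages passed to it, so a follow-up question needs the earlier turns sent again. The sketch below keeps that history in a small class. `Conversation` is a hypothetical helper, and the chat function is passed in as a parameter (in real use, `ollama.chat`) so the class can be tried without a running server.

```python
class Conversation:
    """Keeps the message history that each chat call needs to see."""

    def __init__(self, chat_fn, model="qwen3:8b", system=None):
        # chat_fn is injected; pass ollama.chat in real use.
        self.chat_fn = chat_fn
        self.model = model
        self.messages = []
        if system:
            self.messages.append({"role": "system", "content": system})

    def ask(self, text):
        """Send the user's text plus all earlier turns; record the reply."""
        self.messages.append({"role": "user", "content": text})
        response = self.chat_fn(model=self.model, messages=self.messages)
        reply = response["message"]["content"]
        self.messages.append({"role": "assistant", "content": reply})
        return reply


# Example (requires the ollama module and a pulled model):
# import ollama
# chat = Conversation(ollama.chat)
# print(chat.ask("Why is the sky blue?"))
# print(chat.ask("Explain that to a five-year-old."))
```

The second question only makes sense to the model because the first exchange is still in `messages`.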

Streaming Responses

    import ollama

    stream = ollama.chat(
        model='qwen3:8b',
        messages=[{'role': 'user', 'content': 'Tell me a story'}],
        stream=True,
    )

    for chunk in stream:
        print(chunk['message']['content'], end='', flush=True)
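Printing chunks as they arrive discards the text once it has scrolled past. If the full reply is also needed afterwards, the chunks can be collected while they are shown. A small sketch, where `collect_stream` is an illustrative name and `chunks` is any iterable shaped like the stream above:

```python
def collect_stream(chunks, echo=True):
    """Print streamed chunks as they arrive and return the full text."""
    parts = []
    for chunk in chunks:
        piece = chunk["message"]["content"]
        if echo:
            print(piece, end="", flush=True)
        parts.append(piece)
    if echo:
        print()  # finish the line once the stream ends
    return "".join(parts)


# Example (requires the ollama module and a pulled model):
# import ollama
# stream = ollama.chat(model="qwen3:8b",
#                      messages=[{"role": "user", "content": "Tell me a story"}],
#                      stream=True)
# story = collect_stream(stream)
```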

Generate Text

    import ollama

    response = ollama.generate(
        model='qwen3:8b',
        prompt='Write a haiku about programming'
    )
    print(response['response'])

Document Analysis Tool

    import ollama


    def analyze_document(file_path, model='qwen3:8b'):
        """Analyze a text document using a local LLM."""
        # Read the whole file into memory
        with open(file_path, 'r', encoding='utf-8') as file:
            content = file.read()

        # Build a single prompt: instructions first, then the document text
        prompt = f"""
    Please analyze the following document and provide:
    1. A brief summary
    2. Key themes
    3. Important insights

    Document:
    {content}
    """

        response = ollama.generate(model=model, prompt=prompt)
        return response['response']


    # Usage (the model must already be pulled)
    analysis = analyze_document('report.txt')
    print(analysis)
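Sending the whole file in one prompt works for short documents, but a long one may not fit in the model's context window. One possible workaround, sketched below, is to split the text into chunks, summarize each, and then combine the partial summaries. The 4000-character limit is an arbitrary assumption, and `chunk_text` and `summarize_long_text` are hypothetical helpers. The generate function is passed in (in real use, `ollama.generate`).

```python
def chunk_text(text, max_chars=4000):
    """Split text into chunks of at most max_chars, preferring paragraph breaks."""
    chunks, current = [], ""
    for paragraph in text.split("\n\n"):
        # Hard-split any single paragraph that is itself too long.
        while len(paragraph) > max_chars:
            if current:
                chunks.append(current)
                current = ""
            chunks.append(paragraph[:max_chars])
            paragraph = paragraph[max_chars:]
        candidate = f"{current}\n\n{paragraph}" if current else paragraph
        if len(candidate) <= max_chars:
            current = candidate
        else:
            chunks.append(current)
            current = paragraph
    if current:
        chunks.append(current)
    return chunks


def summarize_long_text(text, generate_fn, model="qwen3:8b", max_chars=4000):
    """Summarize each chunk, then summarize the summaries."""
    partials = [
        generate_fn(model=model, prompt=f"Summarize this passage:\n\n{chunk}")["response"]
        for chunk in chunk_text(text, max_chars)
    ]
    if len(partials) <= 1:
        return partials[0] if partials else ""
    combined = "\n\n".join(partials)
    final = generate_fn(
        model=model,
        prompt=f"Combine these partial summaries into one:\n\n{combined}",
    )
    return final["response"]


# Example (requires the ollama module and a pulled model):
# import ollama
# with open("report.txt", encoding="utf-8") as f:
#     print(summarize_long_text(f.read(), ollama.generate))
```

Counting characters is only a rough stand-in for counting tokens, so the limit should leave generous headroom.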
