Responsible use of LLMs

Large Language Models (LLMs) like ChatGPT are exciting new tools for researchers, but using them well requires more than just typing in prompts.

As we saw earlier, LLMs are not designed to guarantee accurate text, and we cannot trust their output if we use it uncritically.

Taking responsibility

This slide, prepared for an IBM presentation in 1979, is still remarkably relevant: when we use LLMs, we must take accountability and ownership of the results.

This also means openly acknowledging the tools we used whenever they played a role in generating core or original outputs (perhaps not when they were used only for proofreading).

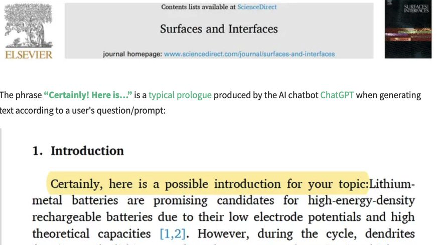

Some bad examples

Unfortunately, the availability of high-throughput text generators has resulted in a flood of bogus research papers, sometimes with evident signs of LLM use that escaped the scrutiny of reviewers. This includes both text:

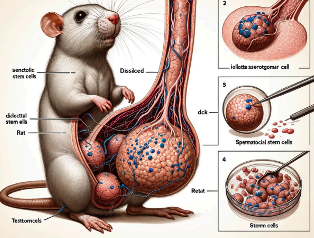

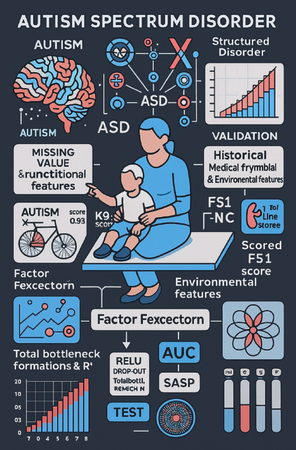

and even images, as in this infamous paper:

The mouse penis example was published in February 2024 and then retracted. More recently (November 2025), a new AI-generated image was featured in a paper published in Scientific Reports, which will likely retract it soon:

Beyond the Magic Solution Myth

LLMs aren’t magic wands that solve all research problems automatically. They’re powerful tools that need careful, thoughtful use. Just like you wouldn’t use a microscope without learning proper technique, LLMs require understanding and skill to be truly useful.

Key aspect: LLMs work best when combined with human expertise and critical thinking, not as replacements for careful research.

Three Foundation Pillars

1. Know Your Data and Biases

Before using any LLM, ask yourself:

- Who built this model and why?

- What data was it trained on?

- Whose voices are included—and whose are missing?

LLMs learn from existing text, which means they can amplify existing biases or dominant viewpoints while ignoring minority perspectives.

2. Maintain Scientific Rigor

LLMs can “hallucinate”, that is, generate convincing but completely wrong information. This is dangerous in research, where accuracy matters.

Best practices:

- Always verify LLM outputs with traditional methods

- Use domain-specific benchmarks to test results

- Document your verification process

- Embrace “slow science”—take time to check and reflect
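One way to make the benchmark and documentation steps concrete is to compare LLM answers against a small set of answers already verified by a domain expert. The following is only a minimal sketch: the `BenchmarkItem` class, the `score_answers` helper, the sample questions, and the sample LLM answers are all hypothetical, and a real benchmark would need a comparison rule suited to your field rather than simple text matching.

```python
from dataclasses import dataclass


@dataclass
class BenchmarkItem:
    question: str
    expected: str  # answer already verified by a domain expert


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial differences are ignored."""
    return " ".join(text.lower().split())


def score_answers(items, answers):
    """Return (accuracy, mismatches) for LLM answers against verified answers."""
    if len(items) != len(answers):
        raise ValueError("items and answers must have the same length")
    mismatches = [
        (item.question, item.expected, answer)
        for item, answer in zip(items, answers)
        if normalize(item.expected) != normalize(answer)
    ]
    accuracy = 1 - len(mismatches) / len(items) if items else 0.0
    return accuracy, mismatches


# Hypothetical benchmark and hypothetical LLM answers, for illustration only.
benchmark = [
    BenchmarkItem("Chemical symbol for sodium?", "Na"),
    BenchmarkItem("Number of chromosomes in a human somatic cell?", "46"),
]
llm_answers = ["na", "48"]

accuracy, mismatches = score_answers(benchmark, llm_answers)
print(f"accuracy: {accuracy:.2f}")
for question, expected, got in mismatches:
    # Every mismatch is a prompt for manual checking, not an automatic verdict.
    print(f"CHECK MANUALLY: {question!r} expected {expected!r}, got {got!r}")
```

Keeping the list of mismatches, rather than only the score, gives you a record of what was checked and what failed, which can go straight into your verification notes.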

3. Practice Transparency

Document the important aspects of your use of LLMs:

- Which LLM you used and how

- What data sources informed your work

- How you verified the results

- What limitations you identified
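The four points above could be captured in a small structured record stored alongside your analysis. This is a sketch under stated assumptions, not an established reporting standard: the `LLMUsageRecord` class, its field names, and the example values (including the model name) are hypothetical and should be adapted to what your journal or institution requires.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import date


@dataclass
class LLMUsageRecord:
    model: str          # which LLM was used (name and version)
    purpose: str        # how it was used
    data_sources: list  # what data sources informed the work
    verification: str   # how the results were verified
    limitations: list = field(default_factory=list)  # limitations identified
    used_on: str = field(default_factory=lambda: date.today().isoformat())


def to_json(record: LLMUsageRecord) -> str:
    """Serialize the record so it can be archived with the project files."""
    return json.dumps(asdict(record), indent=2)


# Hypothetical example values, for illustration only.
record = LLMUsageRecord(
    model="example-llm-v1",
    purpose="First draft of the literature summary",
    data_sources=["Papers listed in references.bib"],
    verification="Every cited claim checked against the original paper",
    limitations=["Summary may omit non-English literature"],
)

print(to_json(record))
```

A plain JSON file like this is easy to version together with your code and data, and easy to convert into the acknowledgement or methods text of a paper.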

Key aspect: Transparency builds trust and enables other researchers to reproduce and build on your work.

Your Responsibility as a Researcher

Using LLMs responsibly means:

- Maintaining healthy skepticism about AI outputs

- Being transparent about when and how you use AI tools

- Taking time to learn proper techniques

- Focusing on enhancing rather than replacing human expertise

Remember: The goal isn’t to avoid LLMs, but to use them thoughtfully as part of rigorous, ethical research practices.

Toning down the AI hype

Since there is a lot of hype around LLMs, a great guide for putting it back into perspective is a website by Carl Bergstrom and Jevin D. West: