Predicting the next word of a sentence

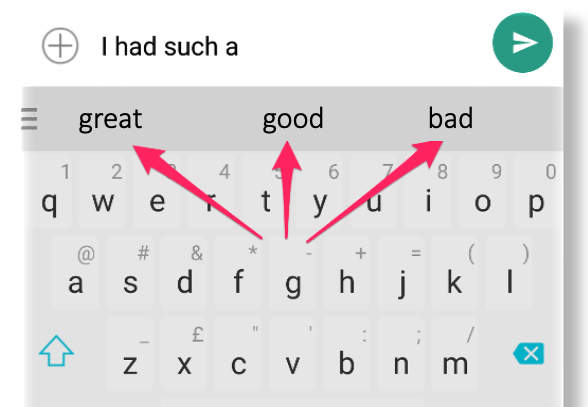

You have a simple language model in your pocket: the keyboard of your mobile phone is programmed to suggest possible next words as you type a message.

This kind of model is called an n-gram model. It is trained on real text, but it only looks at a limited window of words (for example 3 words, in a 3-gram) and records how often each observed triplet occurs.
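The counting idea can be sketched in a few lines of Python. This is a toy illustration, not how any particular phone keyboard is implemented: the function names `train_trigram` and `suggest` and the tiny corpus are made up for the example.

```python
from collections import Counter, defaultdict

def train_trigram(text):
    """Count how often each word follows every pair of consecutive words."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    # Slide a 3-word window over the text: (w1, w2) is the context, w3 the next word.
    for w1, w2, w3 in zip(words, words[1:], words[2:]):
        counts[(w1, w2)][w3] += 1
    return counts

def suggest(counts, w1, w2, k=3):
    """Return up to k of the most frequent words seen after the pair (w1, w2)."""
    followers = counts.get((w1.lower(), w2.lower()), Counter())
    return [word for word, _ in followers.most_common(k)]

# Hypothetical training text; a real keyboard would use far more data.
corpus = (
    "see you soon . see you tomorrow . see you soon . "
    "thank you very much . see you later ."
)
model = train_trigram(corpus)
print(suggest(model, "see", "you"))
```

Here "soon" is suggested first because it follows "see you" twice in the corpus, while the other continuations appear only once. A pair of words that never occurred in the training text yields no suggestion at all, which is one of the limits of such a short window.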

What are Large Language Models?

Large Language Models (LLMs) are a type of artificial intelligence system trained on vast amounts of text data to understand and generate human-like language.

At their core, they are powerful models for predicting the next word, but thanks to a system prompt, they can be turned into chatbots.
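One way to picture this is to write the conversation as a plain-text transcript that ends where the assistant is about to speak, so that predicting the next words means writing the reply. The sketch below only builds such a text. The `build_prompt` helper and the `System:` / `User:` / `Assistant:` layout are assumptions made for illustration; real chat systems use their own formats.

```python
def build_prompt(system_prompt, turns):
    """Lay out a conversation as one text for a next-word predictor to continue."""
    lines = [f"System: {system_prompt}"]
    for speaker, text in turns:
        lines.append(f"{speaker}: {text}")
    # End with the assistant's label: the most likely continuation is its reply.
    lines.append("Assistant:")
    return "\n".join(lines)

prompt = build_prompt(
    "You are a helpful assistant that answers briefly.",
    [("User", "What is an n-gram?")],
)
print(prompt)
```

The model never sees a special "chat mode": it receives this text and keeps predicting one word after another.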

Inside an LLM (Anthropic)

With two new papers, Anthropic’s researchers have taken significant steps towards understanding the circuits that underlie an AI model’s thoughts. This video summarises some findings: